Context-sensitive help for a page includes a breadcrumb trail that takes you back to the Getting Started dialog, and the tasks you can perform on the page or step-by-step instructions for performing a particular task.


To access any of these resources at any time, click the Help icon in the upper right. From the dropdown menu, choose one of the following actions:

- Open the documentation in a new tab/window.
- Open the Snowflake Support Portal/Community in a new tab/window. Once there, you can freely browse the articles and discussions in the site, but to submit cases or participate in discussions, you must log into the portal using your Snowflake Community user credentials. These credentials are separate from your Snowflake user credentials.
- Download client software provided by Snowflake.
- View the distribution info for the following client software provided by Snowflake:
  - Connector for Spark v2.0 and higher (Connector for Spark v1.5 and v1.6 has been deprecated)
  - Connector for Python (and related components)
- Display context-sensitive help for the current page.

If you are running Spark on Windows, you can start the history server by running the command below and then monitor the Spark application:

`SPARKHOME/bin/spark-class.cmd .history.HistoryServer`
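The Windows history server command above can be sketched in Python as follows. This is a minimal illustration, not part of the original instructions: the function name `history_server_command` and its parameters are hypothetical, the example path is a placeholder, and the sketch assumes the class referred to is Spark's `org.apache.spark.deploy.history.HistoryServer`.

```python
from pathlib import PurePosixPath, PureWindowsPath
from typing import List

# Fully qualified name of the history server's main class in Apache Spark.
# Assumption: this is the class the truncated command above refers to.
HISTORY_SERVER_CLASS = "org.apache.spark.deploy.history.HistoryServer"


def history_server_command(spark_home: str, windows: bool = True) -> List[str]:
    """Return the argument list that starts the history server via spark-class.

    `spark_home` is the Spark installation folder (hypothetical parameter name).
    """
    if windows:
        launcher = PureWindowsPath(spark_home) / "bin" / "spark-class.cmd"
    else:
        launcher = PurePosixPath(spark_home) / "bin" / "spark-class"
    return [str(launcher), HISTORY_SERVER_CLASS]


# Example with a placeholder installation folder.
command = history_server_command(r"C:\spark")
print(" ".join(command))
```

Passing the resulting list to `subprocess.run(command)` would launch the server. By default the history server's web UI listens on port 18080.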


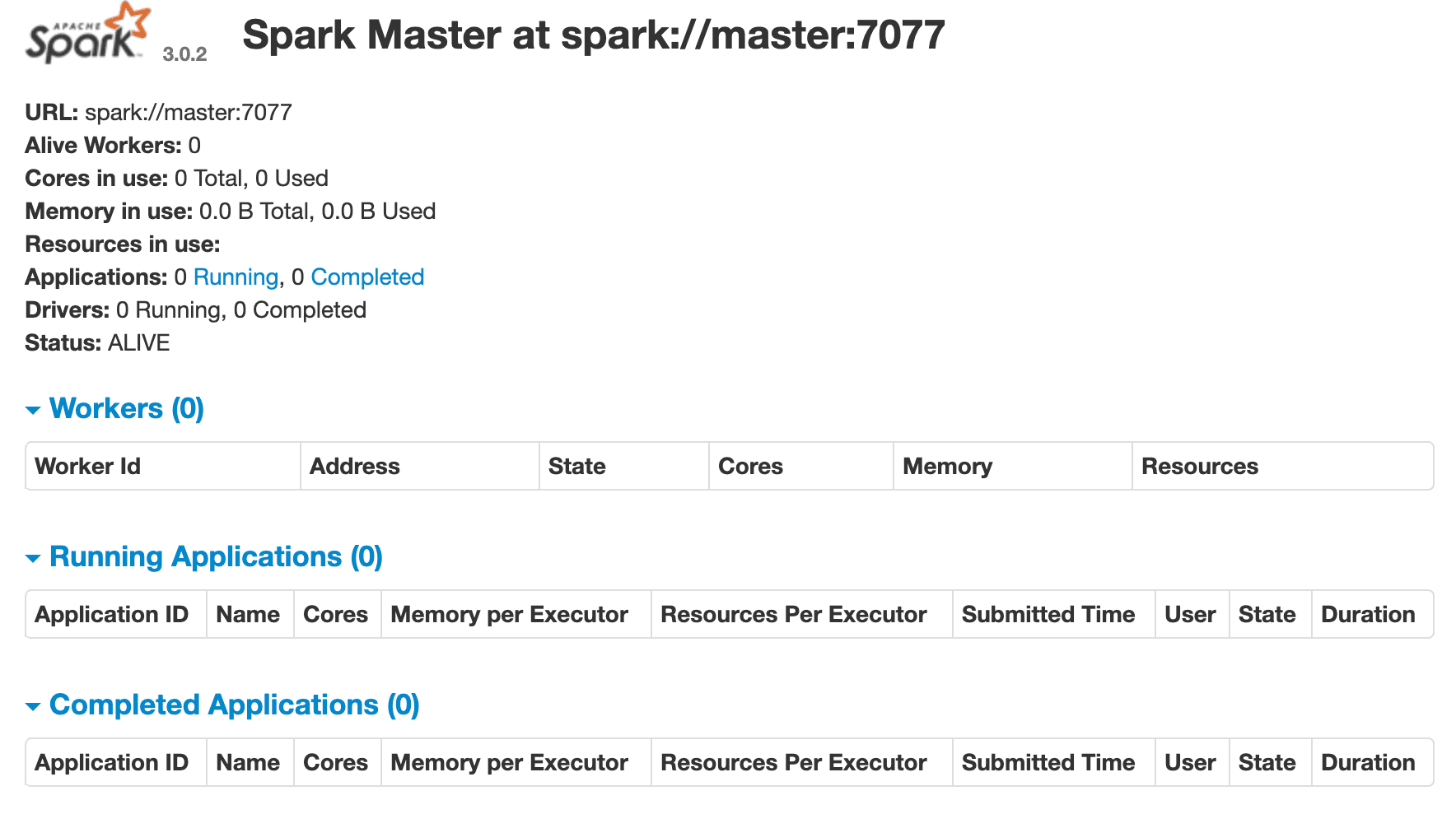

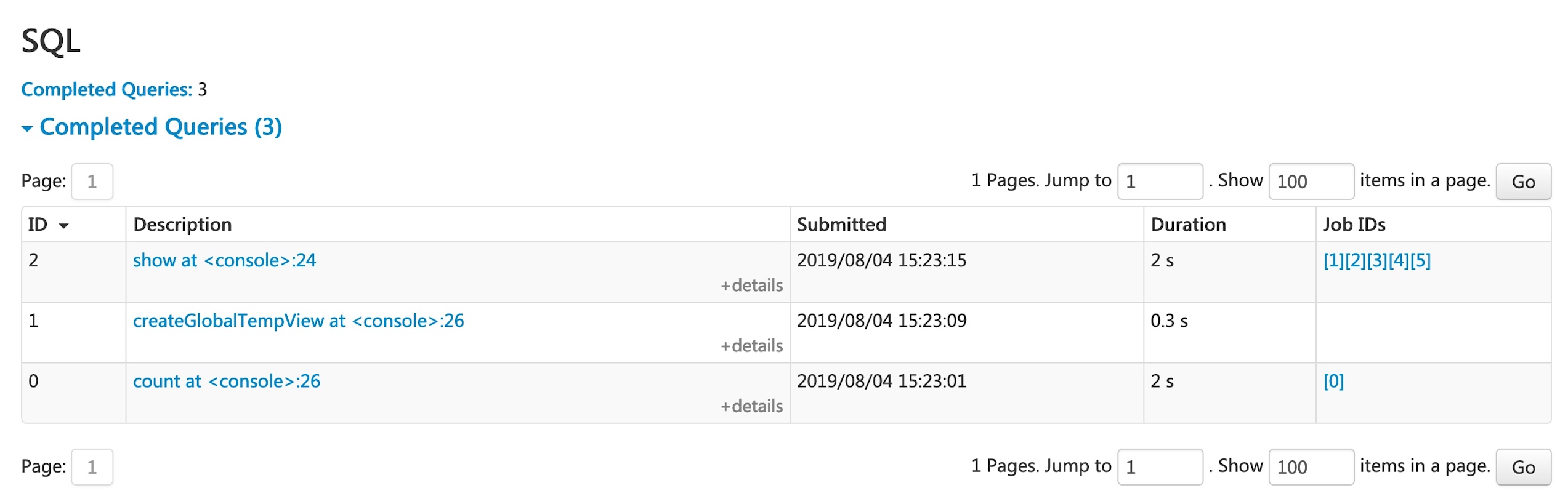

Features missing from this version of the Spark UI are not compatible with the platform.

Accessing Documentation, Support, Downloads, and Context-sensitive Help

The Spark driver's web UI shows the progress of your computations, while the standalone Master's web UI is to let you…

The Executors tab also has useful information, as shown in Figure 4.

This version of the Spark UI has been modified to make it compatible with the platform. This means that some functions that might be available in a native Spark environment are not available here. When you consult other Spark UI documentation, you may see discussions of features that are missing here.

Visualizing big data in the browser using Spark: there are more data points than possible pixels, and manipulating distributed data can take a long time. One solution to these challenges is to use custom rendering engines, but we think that with Spark we can apply off-the-shelf, open source tools.

So all Spark files are in a folder called `C:\spark\spark-1.6.2-bin-hadoop2.6`. From now on, I will refer to this folder as SPARKHOME in this post. To test whether your installation was successful, open a Command Prompt, change to the SPARKHOME directory and type `bin\pyspark`. This should start the PySpark shell, which can be used to work interactively.
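Before launching the shell, the installation check described in this post can be approximated with a small script. This is only a sketch under stated assumptions: the helper name `find_pyspark_launcher` is hypothetical, and it assumes the Spark folder contains a `bin` directory holding a `pyspark.cmd` (Windows) or `pyspark` (Unix-like) launcher, as standard Spark binary distributions do.

```python
from pathlib import Path
from typing import Optional


def find_pyspark_launcher(spark_home: str) -> Optional[Path]:
    """Return the first PySpark launcher found under <spark_home>/bin, or None.

    `spark_home` is the folder the post calls SPARKHOME (hypothetical parameter).
    """
    bin_dir = Path(spark_home) / "bin"
    # Check the Windows launcher first, then the Unix-like one.
    for name in ("pyspark.cmd", "pyspark"):
        candidate = bin_dir / name
        if candidate.is_file():
            return candidate
    return None
```

Calling `find_pyspark_launcher(r"C:\spark\spark-1.6.2-bin-hadoop2.6")` returns the launcher path if the files were unpacked where the post says, and `None` otherwise. It does not start the shell, so it only confirms that the files are in place.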